April 04, 2026

API Security and Rate Limiting: Four Layers Between the API and an Attack

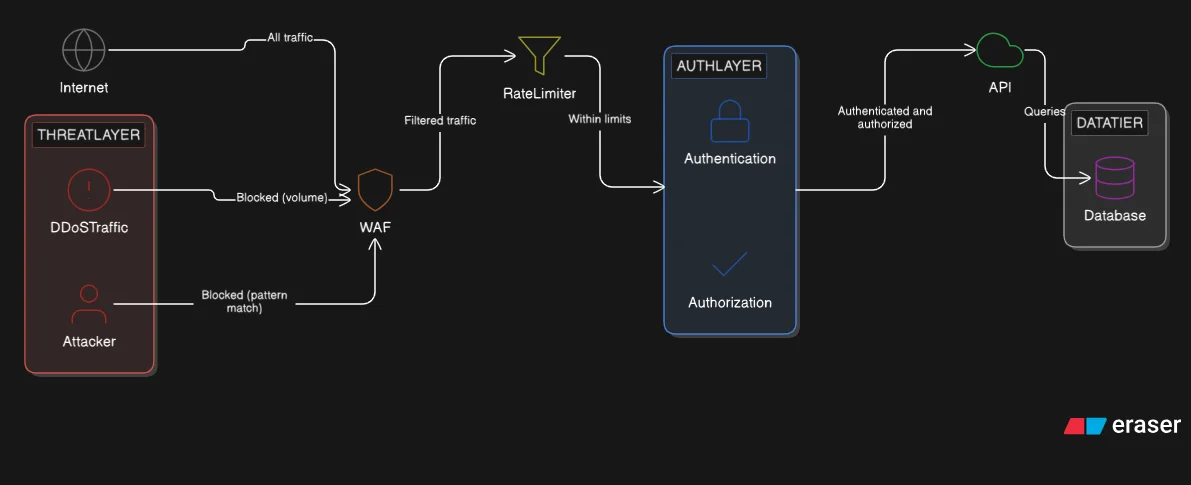

An open API receives requests from anyone. Authentication, authorization, rate limiting, and attack pattern defenses are four distinct layers. Failing any one of them compromises the others.

An open API receives requests from anyone on the internet. The three styles from the previous post (REST, GraphQL, and gRPC) each define what can be requested and what shape the response takes. None of them define who is allowed to ask, how often, or what payloads are safe to process.

Four layers address those questions. Authentication answers: who is making this request? Authorization answers: what is that caller allowed to do? Rate limiting answers: how much traffic is that caller allowed to generate? Attack pattern defenses answer: what input should the system refuse to process regardless of who sends it? Each layer addresses a different threat. Failing any one of them weakens the others: a correctly authorized user can still inject malicious SQL; a correctly authenticated caller can still send ten thousand requests per second.

Authentication

Authentication is the first check on every request. Before any resource is returned, the system must establish who is calling.

API keys. A static token issued to a specific client. The client sends the key in every request, typically in the Authorization or X-API-Key header. The server looks up the key and either accepts or rejects the request. API keys carry no user identity: they represent a known caller, not a known person. They are appropriate for service-to-service communication and developer-facing APIs where the client is a controlled system, not an interactive user.
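A minimal sketch of the server-side key lookup described above, using only the Python standard library. The key value, the client name, and the `identify_client` helper are hypothetical; storing only a digest of each key is an added assumption, not something the text above prescribes.

```python
import hashlib
import hmac
from typing import Optional

# Hypothetical key store: SHA-256 digest of each issued key -> client name.
# Keeping digests instead of raw keys means a leaked table exposes no usable keys.
KEY_DIGESTS = {
    hashlib.sha256(b"demo-key-123").hexdigest(): "billing-service",
}

def identify_client(headers: dict) -> Optional[str]:
    """Return the client name for the presented key, or None if it is unknown."""
    presented = headers.get("X-API-Key", "")
    digest = hashlib.sha256(presented.encode()).hexdigest()
    for stored, client in KEY_DIGESTS.items():
        # compare_digest runs in constant time for equal-length inputs.
        if hmac.compare_digest(stored, digest):
            return client
    return None
```

The return value names a calling system, not a person, which matches the point above: an API key identifies a known caller and nothing more.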

Bearer tokens and JWT (JSON Web Tokens). After a user presents valid credentials, the server issues a signed token. JWT is the standard format: a self-contained structure whose body carries claims such as user ID, roles, expiry time, and the issuing server's identifier. The receiving server validates the signature without a database lookup. The token is stateless: no session is stored server-side, which means any server in the pool can validate any token independently. Access tokens are short-lived, typically minutes to an hour. Refresh tokens are long-lived and have one purpose: obtaining a new access token when the current one expires. The refresh token is never sent to resource endpoints, only to the token-issuing endpoint. The server stores refresh tokens so they can be revoked.
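To make the stateless validation concrete, here is a rough sketch of issuing and verifying an HS256-signed JWT with only the Python standard library. `SECRET`, `issue_token`, and `verify_token` are illustrative names, and the shared-secret (HMAC) signing scheme is an assumption; a production service would more likely use a maintained JWT library and may sign with an asymmetric key.

```python
import base64
import hashlib
import hmac
import json

SECRET = b"demo-secret"  # assumption: shared HMAC key, loaded from a secret store in practice

def _b64url(data: bytes) -> str:
    return base64.urlsafe_b64encode(data).rstrip(b"=").decode()

def _b64url_decode(text: str) -> bytes:
    return base64.urlsafe_b64decode(text + "=" * (-len(text) % 4))

def _sign(signing_input: str) -> bytes:
    return hmac.new(SECRET, signing_input.encode(), hashlib.sha256).digest()

def issue_token(claims: dict) -> str:
    header = _b64url(json.dumps({"alg": "HS256", "typ": "JWT"}).encode())
    payload = _b64url(json.dumps(claims).encode())
    return f"{header}.{payload}.{_b64url(_sign(f'{header}.{payload}'))}"

def verify_token(token: str, now: float):
    """Return the claims if the signature and expiry check out, else None."""
    try:
        header, payload, signature = token.split(".")
        # The algorithm is fixed server-side; the header's "alg" is never trusted.
        if not hmac.compare_digest(_sign(f"{header}.{payload}"), _b64url_decode(signature)):
            return None
        claims = json.loads(_b64url_decode(payload))
    except ValueError:
        return None  # malformed token
    if claims.get("exp", 0) <= now:
        return None  # expired
    return claims

token = issue_token({"sub": "user-1", "role": "editor", "exp": 1000})
claims = verify_token(token, now=999)  # valid until the expiry time
```

Verification touches no database: the secret and the token are enough, which is why any server in the pool can perform it.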

OAuth2. OAuth2 is a delegation protocol. A user authorizes a third-party application to access their resources without revealing their credentials. The authorization server issues a scoped access token that represents only the specific permissions the user approved. The third-party application receives the token, not the password. "Connect to GitHub" is a canonical example. OAuth2 itself covers delegated authorization, not identity: "Login with Google" is OpenID Connect, which adds an identity token on top of the OAuth2 authorization flow, carrying user identity claims alongside the authorization grant.
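As a small illustration of scoped access, the sketch below checks a required scope on the resource server. It assumes the access token has already been validated and that its scopes arrive as a space-delimited `scope` string; the scope names and the `has_scope` helper are hypothetical.

```python
def granted_scopes(token_claims: dict) -> set:
    """Split the space-delimited scope string into a set of scope names."""
    return set(token_claims.get("scope", "").split())

def has_scope(token_claims: dict, needed: str) -> bool:
    """True only if the user approved this specific permission."""
    return needed in granted_scopes(token_claims)

# Hypothetical claims from a token the user approved for read-only repository access.
claims = {"sub": "user-1", "scope": "repo:read user:email"}
can_read = has_scope(claims, "repo:read")
can_write = has_scope(claims, "repo:write")
```

The third-party application can do only what the listed scopes cover, however much the user could do with their own password.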

Authorization

Authentication establishes identity. Authorization determines what that identity can do.

RBAC (Role-Based Access Control). Users are assigned roles (admin, editor, viewer) and each role carries a defined set of permissions. A viewer can read resources. An editor can read and write. An admin can do both and manage other users. The permissions are attached to the role, not the individual. Adding a new user to the admin role grants all admin permissions immediately. RBAC is simple, predictable, and the correct default for most applications. It is the model behind GitHub repository permissions, CMS tools, and team management software.
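A minimal RBAC sketch using the three roles named above. The permission names, the users, and the `is_allowed` helper are illustrative assumptions.

```python
# Permissions attach to roles, never to individual users.
ROLE_PERMISSIONS = {
    "viewer": {"read"},
    "editor": {"read", "write"},
    "admin": {"read", "write", "manage_users"},
}

# Hypothetical role assignments.
USER_ROLES = {"alice": "admin", "bob": "viewer"}

def is_allowed(user: str, permission: str) -> bool:
    """Check the permission against the user's role; unknown users get nothing."""
    role = USER_ROLES.get(user)
    return permission in ROLE_PERMISSIONS.get(role, set())

# Adding a user to a role grants every permission of that role at once.
USER_ROLES["carol"] = "editor"
```

Changing what editors can do is a single edit to `ROLE_PERMISSIONS`, which is a large part of why the model stays predictable.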

ABAC (Attribute-Based Access Control). Authorization decisions are based on attributes of the user, the resource, and the environment simultaneously. A policy might express: allow access if the user's department is HR and the resource is classified internal and the request arrives during business hours. ABAC is more flexible than RBAC: it can enforce conditions that no fixed role structure can express. It is also more complex to define, audit, and debug. Policy conflicts are common when attribute combinations are not carefully managed. In practice, RBAC and ABAC are combined: roles provide coarse-grained access, attributes refine it.
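The example policy above could be sketched as a plain predicate over request attributes. The `AccessRequest` type and the 09:00 to 17:00 definition of business hours are assumptions made for illustration.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class AccessRequest:
    department: str      # attribute of the user
    classification: str  # attribute of the resource
    hour: int            # attribute of the environment (0-23, local time)

def hr_internal_policy(req: AccessRequest) -> bool:
    """Allow HR staff to reach internal resources during business hours."""
    return (
        req.department == "HR"
        and req.classification == "internal"
        and 9 <= req.hour < 17  # assumed business hours
    )
```

The time-of-day condition is the kind of rule a fixed role cannot express, and each extra attribute is another dimension to audit.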

ACL (Access Control List). Each resource carries its own permission list specifying which users can access it and at what level. User A has read-only access to a specific document. User B has read and write access to the same document. User C has no access. Google Drive is the canonical example: every document has an independent sharing configuration. ACLs give fine-grained, per-resource control down to the individual user. The limitation is scale: managing millions of ACL entries across millions of objects becomes operationally expensive without careful tooling.
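A sketch of the three-user document example as a per-resource permission list. The resource ID, the user names, and the two-level ordering (`read` below `write`) are illustrative assumptions.

```python
# Each resource owns its list: user -> highest level granted.
ACLS = {
    "doc-42": {"user_a": "read", "user_b": "write"},
}

LEVELS = {"read": 1, "write": 2}  # write access implies read access

def can_access(user: str, resource: str, needed: str) -> bool:
    """True if the resource's own list grants the user at least the needed level."""
    granted = ACLS.get(resource, {}).get(user)
    return granted is not None and LEVELS[granted] >= LEVELS[needed]
```

Every new document adds another entry to `ACLS`, which is where the scaling cost comes from.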

Rate Limiting

Rate limiting controls how many requests a client can make within a given time period. Without it, a single caller can consume all available server capacity, whether through a deliberate attack or a misconfigured client sending requests in a tight loop.

Limits apply at different granularities. Per-IP limiting blocks a specific network address once it exceeds the threshold. Per-user limiting tracks authenticated callers independently of their IP, which handles clients that rotate addresses. Per-endpoint limiting sets stricter thresholds on expensive or sensitive operations: a login endpoint has a tighter limit than a read endpoint. Global limiting caps total traffic regardless of source: even if every individual IP stays under its per-IP limit, a coordinated attack with thousands of bots can exceed what the system can handle. The global limit is the fallback when distributed sources individually appear legitimate.

Three algorithms implement the counting logic, each with different trade-offs.

Fixed window. Requests are counted within a fixed time boundary: for example, 100 requests per minute. At the top of each minute, the count resets. Fixed window is simple to implement and simple to reason about. The weakness is the boundary burst: a caller can send 100 requests in the last second of one window and 100 requests in the first second of the next window, producing 200 requests in two seconds without violating either window's limit.
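A minimal in-memory sketch of a fixed window counter that reproduces the boundary burst. The class name is hypothetical, and time is passed in explicitly so the behavior is deterministic; a real limiter would read a clock and expire old counters.

```python
from collections import defaultdict

class FixedWindowLimiter:
    def __init__(self, limit: int, window_seconds: int):
        self.limit = limit
        self.window = window_seconds
        # (caller, window index) -> requests seen. Old windows are never purged in this sketch.
        self.counts = defaultdict(int)

    def allow(self, caller: str, now: float) -> bool:
        key = (caller, int(now // self.window))  # the count resets when the index changes
        if self.counts[key] >= self.limit:
            return False
        self.counts[key] += 1
        return True

limiter = FixedWindowLimiter(limit=100, window_seconds=60)
# 150 attempts just before the boundary, 150 just after it.
accepted_before = sum(limiter.allow("client", 59.5) for _ in range(150))
accepted_after = sum(limiter.allow("client", 60.5) for _ in range(150))
burst_total = accepted_before + accepted_after
```

Each window accepts its full quota, so `burst_total` reaches twice the nominal limit within about one second of wall-clock time.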

Sliding window. Instead of resetting at fixed boundaries, the window moves continuously. At any moment, the count covers the most recent 60 seconds of requests, regardless of where those seconds fall relative to a clock minute. The boundary burst problem is eliminated: there is no boundary. The cost is higher memory: the system must store a timestamp for each recent request rather than a single counter.
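One way to sketch the sliding window is a per-caller log of timestamps, which also makes the memory cost visible. The class name is hypothetical and time is injected for determinism.

```python
from collections import defaultdict, deque

class SlidingWindowLimiter:
    def __init__(self, limit: int, window_seconds: float):
        self.limit = limit
        self.window = window_seconds
        self.log = defaultdict(deque)  # caller -> timestamps of accepted requests

    def allow(self, caller: str, now: float) -> bool:
        timestamps = self.log[caller]
        # Drop entries that have slid out of the most recent window.
        while timestamps and timestamps[0] <= now - self.window:
            timestamps.popleft()
        if len(timestamps) >= self.limit:
            return False
        timestamps.append(now)
        return True

limiter = SlidingWindowLimiter(limit=100, window_seconds=60)
accepted_before = sum(limiter.allow("client", 59.5) for _ in range(150))
accepted_after = sum(limiter.allow("client", 60.5) for _ in range(150))
```

The second batch is rejected in full because the first 100 requests are still inside the trailing 60 seconds. The price is one stored timestamp per accepted request.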

Token bucket. Each caller is assigned a bucket with a maximum token capacity. Tokens replenish at a fixed rate: for example, one token per second up to a maximum of 60. Each incoming request consumes one token. When the bucket is empty, the request is rejected. Requests are accepted again as soon as one token has replenished: a caller does not wait for a full bucket, only for the next token. Token bucket allows short bursts up to the bucket capacity while enforcing a sustainable long-run average rate. It is a common production choice: Stripe and AWS API Gateway, for example, both describe their throttling in token bucket terms. The bucket capacity controls burst tolerance; the replenishment rate controls the sustained throughput ceiling.
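A minimal token bucket sketch for a single caller, using the figures from the paragraph above (capacity 60, one token per second). The class name is hypothetical, time is injected for determinism, and a real deployment would keep one bucket per caller in shared storage.

```python
class TokenBucket:
    def __init__(self, capacity: int, refill_per_second: float, now: float = 0.0):
        self.capacity = capacity
        self.rate = refill_per_second
        self.tokens = float(capacity)  # start full, so an initial burst is allowed
        self.updated = now

    def allow(self, now: float) -> bool:
        # Refill lazily, based on the time elapsed since the last call.
        elapsed = now - self.updated
        self.tokens = min(self.capacity, self.tokens + elapsed * self.rate)
        self.updated = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False

bucket = TokenBucket(capacity=60, refill_per_second=1.0)
burst_accepted = sum(bucket.allow(0.0) for _ in range(100))  # burst up to capacity
too_soon = bucket.allow(0.5)   # only half a token has replenished
next_token = bucket.allow(1.0) # one full token is available again
```

Raising `capacity` tolerates larger bursts without changing the long-run rate; raising `refill_per_second` lifts the sustained ceiling.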

Common Attack Patterns

Three attack types bypass authentication and authorization entirely if the application mishandles input. No amount of JWT validation prevents them.

SQL injection. User input is embedded directly into a database query string. An attacker crafts input that terminates the intended query and appends their own, reading arbitrary data, deleting tables, or bypassing authentication checks entirely. The fix is parameterized queries: the query structure is fixed before any user input is bound, and user input is passed as a parameter, not concatenated into the query string. The database treats the parameter as data, never as SQL syntax. ORMs apply parameterization by default in their query builders. Raw string concatenation in a query is the mistake.
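The difference can be shown with Python's built-in `sqlite3` module and an in-memory database. The table, the rows, and the two lookup helpers are made up for the demonstration.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE users (name TEXT, secret TEXT)")
conn.executemany(
    "INSERT INTO users VALUES (?, ?)",
    [("alice", "a-secret"), ("bob", "b-secret")],
)

def find_unsafe(name: str):
    # VULNERABLE: the input is concatenated into the SQL text.
    return conn.execute(f"SELECT name FROM users WHERE name = '{name}'").fetchall()

def find_safe(name: str):
    # The ? placeholder binds the input as data; it is never parsed as SQL.
    return conn.execute("SELECT name FROM users WHERE name = ?", (name,)).fetchall()

payload = "x' OR '1'='1"
leaked = find_unsafe(payload)  # the WHERE clause becomes always true
blocked = find_safe(payload)   # no user is literally named x' OR '1'='1
```

With the concatenated query the payload rewrites the condition and every row comes back; with the bound parameter the same string is just a name that matches nothing.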

CSRF (Cross-Site Request Forgery). A logged-in user visits a malicious website. That website causes the user's browser to submit a request to the legitimate API, carrying the user's session cookie automatically. The API cannot distinguish this forged request from a genuine user action based on the cookie alone: both carry valid credentials. The fix is a CSRF token: a unique, unpredictable value embedded in the legitimate application's forms and verified server-side on every state-modifying request. The token is tied to the user's session but is not automatically sent by the browser the way a cookie is. A cross-origin attacker can cause the browser to send the request, but cannot read the page to extract the token, and therefore cannot include it in a forged request.
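A sketch of the token check, assuming the server keeps one CSRF token per session in memory. The dictionary store and both helper names are hypothetical; web frameworks typically ship this as built-in middleware.

```python
import hmac
import secrets

SESSION_CSRF_TOKENS = {}  # session id -> CSRF token (hypothetical in-memory store)

def issue_csrf_token(session_id: str) -> str:
    """Create an unpredictable token to embed in the application's own forms."""
    token = secrets.token_urlsafe(32)
    SESSION_CSRF_TOKENS[session_id] = token
    return token

def is_valid_csrf(session_id: str, submitted: str) -> bool:
    """Verify the token on a state-modifying request."""
    expected = SESSION_CSRF_TOKENS.get(session_id)
    if expected is None:
        return False
    # Constant-time comparison; bytes avoid errors on non-ASCII input.
    return hmac.compare_digest(expected.encode(), submitted.encode())

form_token = issue_csrf_token("session-1")
```

A forged cross-site request carries the session cookie but has no way to supply `form_token`, so `is_valid_csrf` rejects it.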

XSS (Cross-Site Scripting). An attacker stores a malicious script in application data: a comment field, a profile name, an order note. When another user loads the page containing that data, the browser executes the injected script in the victim's session. The script can steal cookies, read page content, or make requests on the victim's behalf. The fix is output sanitization: every piece of user-submitted content is escaped before being rendered in HTML. Escaped content displays as literal text; the browser never parses it as executable code. A Content Security Policy (CSP) header provides a second layer: it instructs the browser which script sources are permitted, blocking inline script execution even if unescaped content accidentally reaches the page.
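Escaping on output can be sketched with the standard library's `html.escape`. The `render_comment` helper and the specific CSP value are illustrative assumptions; template engines usually apply this escaping automatically.

```python
import html

def render_comment(text: str) -> str:
    """Escape user-submitted text before placing it inside HTML."""
    return f"<p>{html.escape(text)}</p>"

# Example response header: only same-origin scripts, no inline scripts.
CSP_HEADER = ("Content-Security-Policy", "default-src 'self'; script-src 'self'")

rendered = render_comment("<script>alert(1)</script>")
# rendered == "<p>&lt;script&gt;alert(1)&lt;/script&gt;</p>"
```

The browser shows the escaped string as literal text. If an unescaped script slipped through elsewhere, the CSP header would still tell the browser to refuse inline execution.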

Defense in Depth

Authentication, authorization, and input validation operate within the application. Rate limiting typically operates at the gateway or middleware layer, before requests reach application code. Three additional layers operate in front of all of them.

TLS (Transport Layer Security). All traffic between clients and the API travels over HTTPS. TLS encrypts data in transit, preventing interception and tampering. It is not optional. An API that accepts HTTP without TLS exposes authentication tokens, session cookies, and sensitive payloads to any network observer on the path.

WAF (Web Application Firewall). A WAF inspects incoming HTTP traffic against a ruleset of known attack signatures: SQL keywords in URL parameters, script tags in request bodies, malformed HTTP methods, abnormally large payloads. Requests matching attack patterns are rejected before they reach the application. AWS WAF, Cloudflare WAF, and equivalent products apply this filtering at the network edge.

VPN (Virtual Private Network). Internal APIs (admin dashboards, internal service endpoints, monitoring tools) have no reason to accept connections from the public internet. A VPN restricts access to a specific authenticated network. Callers outside the network cannot reach these endpoints regardless of whether they hold valid credentials. For external actors, the attack surface shrinks to the VPN entry point itself.

No single layer is sufficient. Each addresses a different threat vector. TLS does not prevent SQL injection. Rate limiting does not prevent CSRF. WAF signature matching does not prevent a legitimate authenticated user from abusing their access. The combination of all layers is what constitutes a defended API.

Takeaways

- Authentication establishes identity. Three mechanisms: API keys (static, service-to-service), JWT bearer tokens (self-contained claims, stateless, access plus refresh token pattern), OAuth2 (delegation protocol for third-party access with scoped permissions).

- Authorization determines what the authenticated identity can do. Three models: RBAC (roles with defined permissions, the practical default), ABAC (attribute-based policies, more flexible and more complex), ACL (per-resource permission lists, fine-grained but operationally expensive at scale).

- Rate limiting controls request volume. Limits apply per-IP, per-user, per-endpoint, and globally. Token bucket is a common production choice: it allows burst traffic up to bucket capacity and enforces a sustainable average rate through token replenishment.

- Three input-handling vulnerabilities require explicit fixes regardless of authentication state: SQL injection (parameterized queries), CSRF (CSRF tokens on state-modifying requests), XSS (output sanitization before rendering).

- Defense in depth means layering controls at multiple points: TLS for transit, WAF for traffic filtering, VPN for network-level restriction, rate limiting at the gateway, and input validation inside the application.

Security controls who can call the API and at what rate. As traffic grows and the API absorbs more authenticated request volume, the database becomes the next constraint. A single database node handling all reads eventually saturates. Replication distributes that read load across multiple copies. That is the next problem.